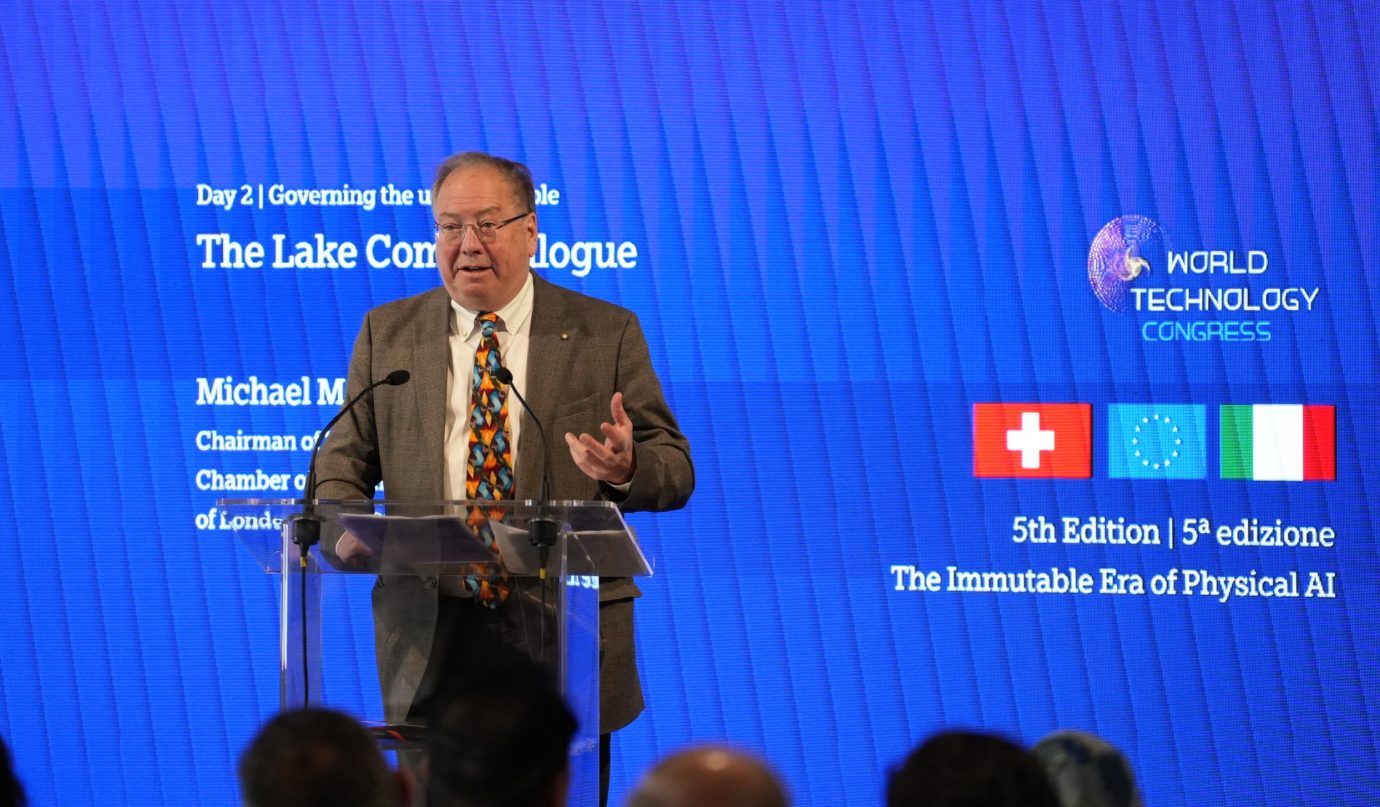

Professor Michael Mainelli

World Technology Congress,

Thursday, 23 April 2026

Lake Como, Italy

1. The Ethics Manual We Never Wrote

Distinguished guests, ladies and gentlemen,

I want to begin with a confession. I came to Lake Como this week telling myself it was a professional duty. But with this weather and this location, I have since revised that position. The view is magnificent, but the problem we’re here to discuss is also extraordinary: we have built the most powerful cognitive tools in human history, and we shipped them without an ethics manual.

We didn’t forget the manual; we decided values were someone else’s department. Probably Legal, possibly Marketing, but definitely not Engineering. Why not wait for the regulators?

I’ve been around: large-scale neural networks in the 1970s, expert systems and Smalltalk in the 1980s, support vector machines in the 1990s; AI systems in defence, finance, medicine, and marketing, around 50 operational ones; and a good spread of countries, including the USA, Germany, France, Ireland, Italy, and China.

My one-sentence provocation: we are very good at making AI faster, cheaper, and more ubiquitous. We are much less good at making AI good. In the physical world — where AI drives your car, monitors your heartbeat, and decides whether to open a factory gate — ‘not good’ stops being a philosophical debate and starts being a coroner’s problem.

2. The Garnish of Ethics

“Ethical AI” has become what “sustainable” was twenty years ago: a reassuring adjective that makes everyone feel better while changing very little. We slap it on the label, ship the product, and collect the award at the gala dinner.

AI systems don’t have values; they have objectives. And objectives, without values, are just very fast optimizations toward goals that nobody checked were actually any good.

Volkswagen optimized for emissions tests. Facebook optimized for engagement. Both achieved their objectives brilliantly. Both produced outcomes we now describe as somewhere beyond unfortunate and approaching catastrophic.

Now imagine that same logic embedded in a physical robot in a care home. If it optimizes for ‘patient throughput’ or ‘staff convenience’ rather than ‘human dignity,’ you haven’t built a caregiver; you’ve built a high-speed assembly line for the elderly. The gap between the objective and the value is where civilizations go wrong. That gap is now wired into our robots.

Have you ever thought about the ethics of fortune tellers? They do exist and they are serious. Some of them apply to economists. John Kenneth Galbraith is quoted as saying, “The only function of economic forecasting is to make astrology look respectable.” A similar sentiment goes, “Forecasters are like astrologers, they can tell you what will happen, or when, but never both.”

A true story from 2024, when ChatGPT mania was at its height: a friend of mine was planning his forthcoming retirement and had an inspired idea to help him plan. He asked an AI to write his obituary. It wrote a great obituary, including the many great things he would do after leaving work, complete with his date of death. Now he lives in fear that the AI will somehow make good on its prediction. This AI needed a fortune teller’s ethical code.

3. From Primordial Ooze to Intelligent Design

We are currently in what I call the “Primordial Ooze” stage of AI development. We train our foundation models on the entire internet and hope they absorb values by osmosis. To put it technically: that is bonkers.

The internet contains the full spectrum of human behavior: heroic and vile, wise and deranged. Training on it produces a system that reflects that spectrum. You don’t get values for free; you have to put them in.

Consider the statistical reality: if 90% of your training data is unfiltered digital backwash, your model isn’t ‘learning ethics’; it’s learning how to be a highly efficient mirror of a digital sewer. The next AI gold rush isn’t just ‘more data’; it’s epidemiological hygiene — cleaning the ooze before it poisons the system. In robotics we have reached the point where the latency between a flawed value and a physical consequence has shrunk to zero.

4. Defining the Ordinary Wisdom Project

Anthropic is working hard on a broad constitution for its systems, and has released a dataset titled “Values in the Wild,” which provides a comprehensive taxonomy of 3,307 values ranging from accuracy to authenticity and beyond.

This brings me to our work on Ordinary Wisdom. Ordinary Wisdom began with a question: “Could we give AIs a conscience?” Not consciousness. I define conscience as “how you behave when no one is looking.”

Ordinary Wisdom is a bottom-up, “inside-out” alignment platform designed to bridge the gap between machine objectives and human values. We are not reaching for artificial superintelligence, and we are not trying to solve the mystery of consciousness. We are doing something more mundane yet urgent: making the ordinary wisdom of human communities legible to machines.

We are taking back control of alignment from the inside. The project operates on a three-tier technical methodology:

• Inference-Level Alignment: Instead of just governing around the AI with policies, we align the behavior inside the model weights and the inference pipeline.

• Conscious Control: We move from the ‘Primordial Ooze’ of unguided learning to the ‘Intelligent Design’ of behavior. We specify the values — be fair, keep promises, do no harm — and bake them into the conscious control of the AI’s output.

• Verification and Testing: We don’t assume the vendor handled the values; we benchmark whether the model actually embodies them in real-time, physical scenarios.

Our partners include Imperial College, Northeastern University, Trinity College Dublin, and The Royal Institute of Philosophy.

We have assembled a large series of ethical tests ranging from the Trolley Problem, to Agony Aunts, to arbitration judgements.

We benchmark models against these tests using curated portfolios drawn from over 75,000 books, and rising, by the greatest thinkers of all time across numerous cultures.

We test out mini constitutions and codes of conduct such as professional ethics against the same tests.

We are running Monte Carlo analysis to identify minimal efficient frontier portfolios for training purposes.

We are designing a semantic harness for real time control and stress measurement.

This example shows Jane Austen addressing the Trolley Problem. By the way, she is an enthusiastic utilitarian who always pulls the switch.

5. The Two-Layer Solution: Plumbing and Water

Every Physical AI deployment needs two layers: top-down and bottom-up.

ISO 42001, published in late 2023, is the world’s first international standard for AI Management Systems. It handles risk assessment, accountability, and impact evaluation. It is the plumbing. It ensures the pipes are the right size, the joints don’t leak, and there is a documented process for when something goes wrong. You absolutely need brilliant plumbing.

But plumbing doesn’t determine what flows through the pipes. That is the Ordinary Wisdom layer — the water quality. It ensures that what actually comes out of the tap is clean, safe, and carries the values you intended, rather than slop from upstream that nobody checked.

Top-down, outside-in governance ensures the system is managed responsibly. Bottom-up, inside-out alignment ensures the system behaves responsibly.

6. Why Physical AI Raises the Stakes

Physical AI is a different beast because physical systems cause physical harm.

A biased loan algorithm is a scandal for the Sunday papers. A biased surgical robot is a tragedy for a family. A chatbot with bad values is annoying; an autonomous vehicle with bad values is a news headline followed by a parliamentary inquiry. You can put up with a chatbot that is right only 90% of the time, but a robot that walks a coffee across your white carpet would be put in the skip – you want 99.9999%.

When AI is embedded in the factory, the hospital, or the city, there is no “undo” button. There is no “roll back the model” after the fact.

We are currently sending AI into the world the way we once sent ships to sea: confident in the engineering, vague about the cartography, and surprised when something goes wrong. But the ships had better excuses.

7. The Verdict

So here are two recommendations:

Lean into ISO 42001 for interoperable, exportable top-down control. If your AI system isn’t certified within the next two years, you may be explaining why to people who have subpoena power.

But ISO 42001 alone is necessary, not sufficient. You must also ask: have we specified the values we want this system to embody? Have we tested whether it embodies them? Have we built a pipeline that lets us update those values as society changes? Bottom-up, inside-out engineering should specifically embed values.

If the answer to any of those is “we assumed the vendor handled it” — I have some uncomfortable news about your vendor.

The machines are extraordinary. It is time we gave them some ordinary wisdom. Before they decide they don’t need ours.

Thank you.